AI experimentation is a balancing act: the thrill of pushing boundaries vs. the responsibility to do it safely. It’s easy to get caught up in the possibilities—but it’s the precautions that matter most.

Yesterday, I posted about trying out Clawdbot, and the discussion that followed raised important points about responsible experimentation. Some readers asked thoughtful questions about sandboxing practices. Others shared their own approach: sticking to tools on test platforms and watching autonomous agents from a distance, considering them “a step too far” for production desktops. Both perspectives highlight different ways to think about risk.

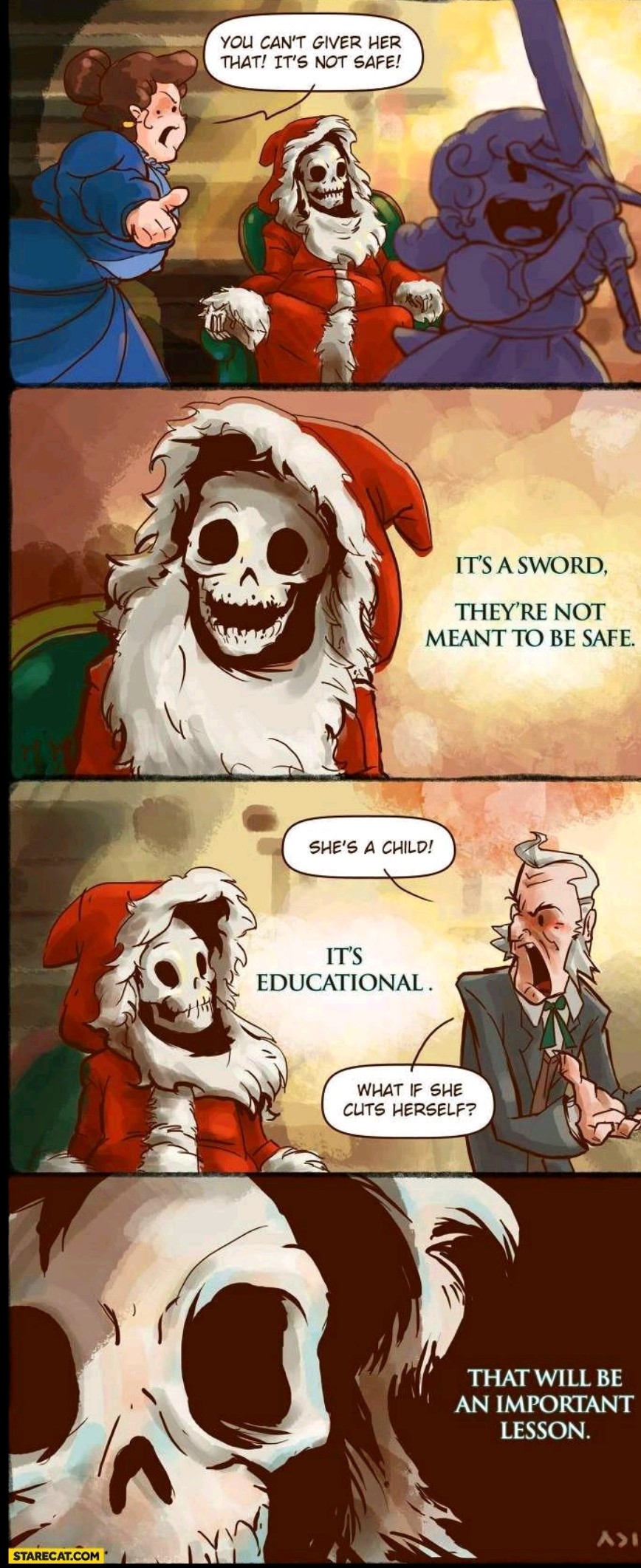

The Hogfather Wisdom

Someone shared a comic from Terry Pratchett’s Hogfather that captures this perfectly:

“You can’t give her that! It’s not safe!”

“It’s a sword. They’re not meant to be safe.”

“She’s a child!”

“It’s educational.”

“What if she cuts herself?”

“That will be an important lesson.”

This resonates with how I think about bleeding-edge AI tools. Autonomous agents with access to your email, calendar, and files aren’t meant to be safe in the sense of risk-free. They’re powerful tools that require respect, preparation, and the willingness to learn from mistakes—ideally small, contained ones.

A Safer Approach

When testing tools like Clawdbot, here’s what you can do to be safer:

Limit the blast radius. Run tools in an isolated WSL2 instance, not your main development environment. Use a Microsoft 365 test tenant instead of your work account, and test credentials for everything. If something breaks, it stays contained.

A separate desktop or “alter-ego setup” isn’t overkill—it’s smart design. When you’re giving an agent access to years of work and relationships, containment isn’t paranoia. It’s professionalism.

Sandbox everything. Use test credentials only, restrict network access, and monitor and log every session. Clawdbot’s documentation covers its sandboxing approach well: it can run tool execution inside Docker containers to limit what a buggy or compromised agent can do. The gateway stays on the host, while file operations, commands, and browser automation run in isolation. See the sandboxing docs for the technical details.
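To make “restricted network access” and “log all sessions” concrete, here is a minimal Python sketch. It is not Clawdbot’s configuration (its sandboxing docs cover that); it assumes plain Docker and uses only standard `docker run` flags to launch one tool command with no network, a read-only root filesystem, no Linux capabilities, and a single writable scratch mount. The function names `build_sandbox_argv` and `log_session_event`, the resource limits, and the JSON Lines audit format are hypothetical choices for illustration.

```python
import json
import time
from pathlib import Path


def build_sandbox_argv(image: str, workspace: Path, command: list[str]) -> list[str]:
    """Build a `docker run` argument list for one isolated tool execution."""
    return [
        "docker", "run",
        "--rm",                                 # delete the container when it exits
        "--network", "none",                    # no network interfaces except loopback
        "--read-only",                          # root filesystem mounted read-only
        "--cap-drop", "ALL",                    # drop every Linux capability
        "--security-opt", "no-new-privileges",  # block privilege escalation via setuid binaries
        "--memory", "512m",                     # illustrative resource caps (hypothetical values)
        "--pids-limit", "128",
        # The only writable path: a scratch directory on the host.
        "--mount", f"type=bind,source={workspace.resolve()},target=/workspace",
        "--workdir", "/workspace",
        image,
        *command,
    ]


def log_session_event(log_path: Path, event: str, **details: object) -> None:
    """Append one JSON object per line so every session can be reviewed later."""
    record = {"ts": time.time(), "event": event, **details}
    with log_path.open("a", encoding="utf-8") as fh:
        fh.write(json.dumps(record) + "\n")
```

On a host with Docker installed, passing the returned list to `subprocess.run(argv, check=True)` would start the container. Logging the command before it runs and the exit code afterwards gives you a record of exactly what the agent did. The bind-mounted workspace stays writable, so treat anything written there as untrusted output.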

Understand what you’re granting access to. Don’t just click “yes” on OAuth consent screens; read which scopes you’re granting. If the tool is open source, grep the repository before running it and look for credential handling, network calls, and file system access. Know your threat model. What’s the worst case if this agent is compromised? Design your sandbox accordingly.
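The pre-flight grep can be scripted so it runs the same way on every repository you evaluate. The Python sketch below walks a checked-out repo and groups matching lines into the three categories above. The regular expressions, file extensions, and the `scan_repo` name are hypothetical starting points that assume a Python or JavaScript/TypeScript codebase. Expect false positives, and treat every hit as a pointer for manual reading rather than a verdict.

```python
import re
from pathlib import Path

# Hypothetical starter patterns; tune them for the languages and libraries the repo uses.
PATTERNS = {
    "credentials": re.compile(r"api[_-]?key|secret|token|password|oauth", re.IGNORECASE),
    "network": re.compile(r"requests\.|urllib|http\.client|socket\.|fetch\(|axios|websocket", re.IGNORECASE),
    "filesystem": re.compile(r"\bopen\(|shutil\.|os\.remove|unlink|rmtree|writeFile|readFile"),
}
SOURCE_SUFFIXES = {".py", ".js", ".ts", ".mjs", ".cjs", ".json", ".sh"}


def scan_repo(root: Path) -> dict[str, list[str]]:
    """Return 'path:line: text' hits for each pattern category."""
    hits: dict[str, list[str]] = {category: [] for category in PATTERNS}
    for path in sorted(root.rglob("*")):
        if not path.is_file() or path.suffix not in SOURCE_SUFFIXES:
            continue
        # Skip vendored dependencies and git internals to keep the report readable.
        if "node_modules" in path.parts or ".git" in path.parts:
            continue
        text = path.read_text(encoding="utf-8", errors="ignore")
        for lineno, line in enumerate(text.splitlines(), start=1):
            for category, pattern in PATTERNS.items():
                if pattern.search(line):
                    hits[category].append(f"{path.relative_to(root)}:{lineno}: {line.strip()}")
    return hits
```

Calling `scan_repo(Path("path/to/clone"))` and printing each category gives you a reading list. A suspiciously empty category is also worth a second look, since it may mean the relevant code uses a library the patterns don’t cover.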

The Paradox

The paradox is that what makes these tools powerful—their deep integration into your workflow—is exactly what makes them risky. You can’t be casual about it.

Successful experimentation with autonomous agents requires careful planning. Test tenants. Separate VMs. Actually reading permissions. Grepping repos. Logging what agents do.

Moving Forward

Like the Hogfather’s lesson about the sword, autonomous AI tools aren’t meant to be risk-free. But that doesn’t mean being reckless. It means thoughtful, contained experimentation where “cutting yourself” teaches you something valuable without doing real damage.

The technology is too powerful to wing it. But it’s also too interesting not to explore.

AI is evolving fast, and nobody has all the answers yet—so let’s build this playbook together. What’s worked for you so far? Any lessons or mistakes you want to share?

If you’re experimenting with autonomous agents, I’d like to hear from you. What’s your setup? What broke that you didn’t expect?

Connect with me on LinkedIn to continue the conversation.